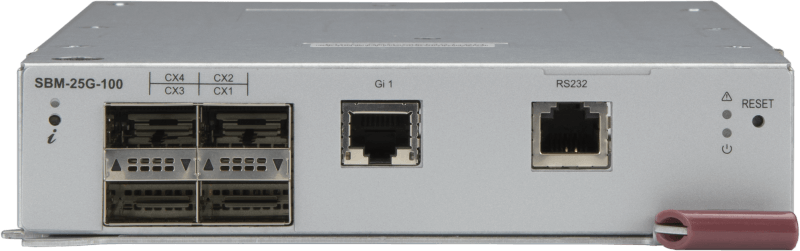

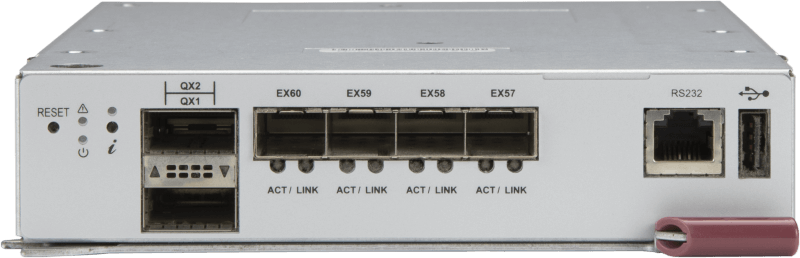

25G Ethernet

| General Specifications |

|

| Switching Capacity |

|

| Physical Layer Features |

|

| Layer 3 Features |

|

| Layer 2 Features |

|

| Advanced Layer 2 Features |

|

| Security Features |

|

| System Management |

|

| Multicast |

|

| Weight |

|

| Dimensions (W x D x H) |

|

| Download | |

* When used in SBE-610J and SBE-610J2, the 1G Ethernet port is not available for uplink.
* Uplink availability and supported port speeds may differ between enclosures; refer to the “Connectivity Guide”.

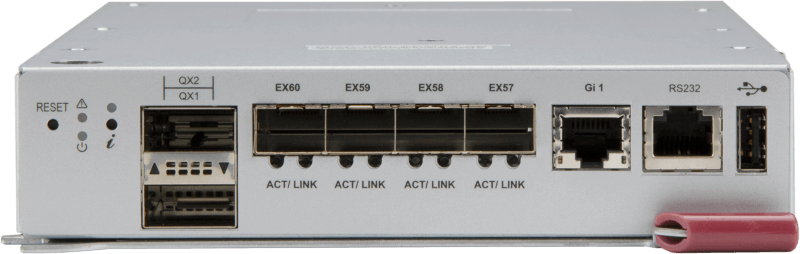

| General Specifications |

|

| Switching Capacity |

|

| Physical Layer Features |

|

| Layer 2 Features |

|

| Advanced Layer 2 Features |

|

| Security Features |

|

| System Management |

|

| Multicast |

|

| Automation |

|

| Weight |

|

| Dimensions (W x D x H) |

|

| Download |

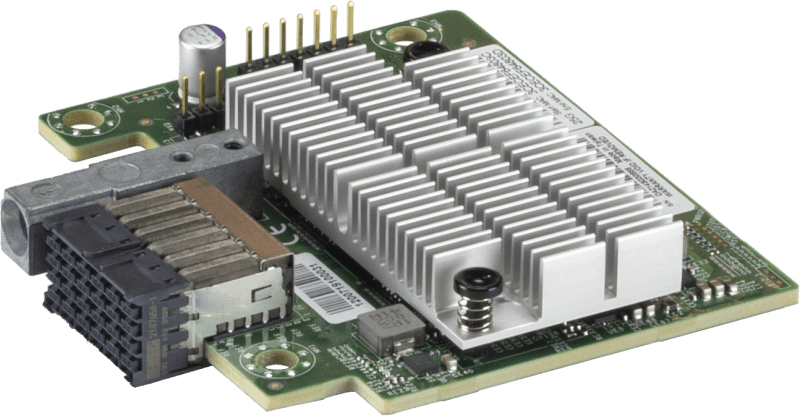

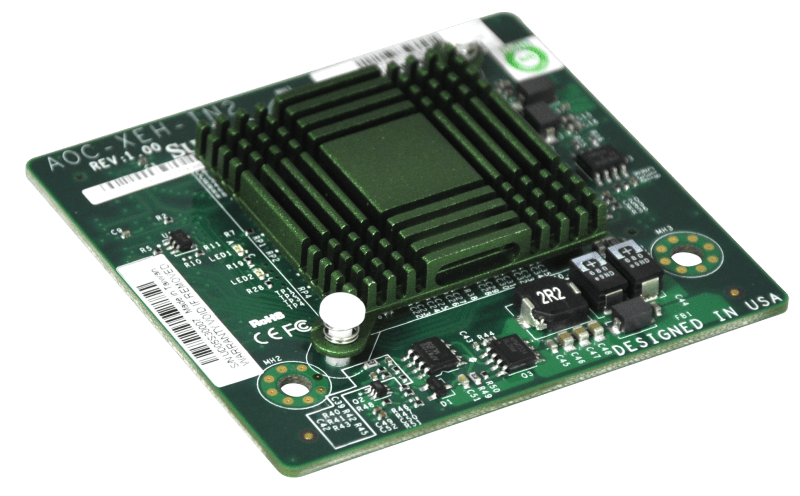

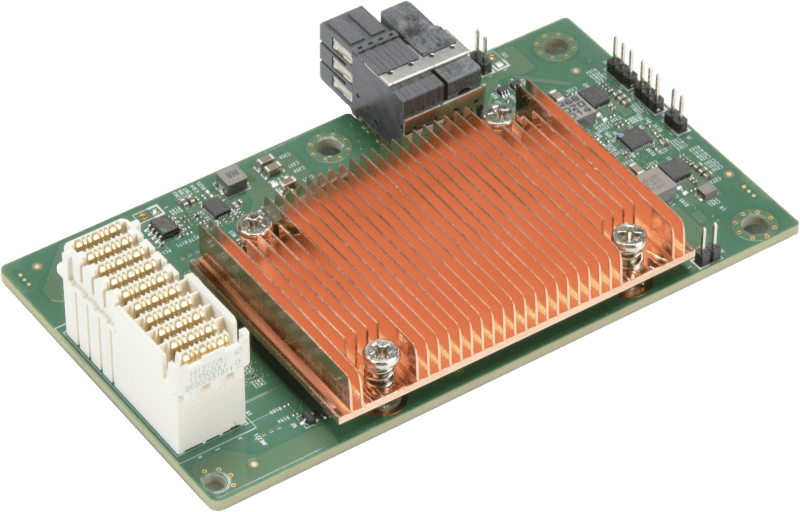

| Chipset |

|

| Ports |

|

| Power Consumption |

|

| Download |

|

10G Ethernet

| General Specifications |

|

| Switching Capacity |

|

| Physical Layer Features |

|

| Layer 2 Features |

|

| Advanced Layer 2 Features |

|

| Security Features |

|

| System Management |

|

| Multicast |

|

| Automation |

|

| Weight |

|

| Dimensions (W x D x H) |

|

| Download |

| General Specifications |

|

| Switching Capacity |

|

| Physical Layer Features |

|

| Layer 3 Features |

|

| Layer 2 Features |

|

| Advanced Layer 2 Features |

|

| Security Features |

|

| System Management |

|

| Multicast |

|

| Weight |

|

| Dimensions (W x D x H) |

|

| Download | |

* (MicroBlade) When used in a 6U MicroBlade enclosure, the available uplink ports are 2x 40G, or 1x 40G and 4x 10G.

| General Specifications |

|

| Switching Capacity |

|

| Physical Layer Features |

|

| Layer 3 Features |

|

| Layer 2 Features |

|

| Advanced Layer 2 Features |

|

| Security Features |

|

| System Management |

|

| Multicast |

|

| Weight |

|

| Dimensions (W x D x H) |

|

| Download | |

* (MicroBlade) When used in a 6U MicroBlade enclosure, the available uplink ports are 2x 40G, or 1x 40G and 4x 10G.
* When used in MBE-620E, the 1G Ethernet port is not available for uplink.

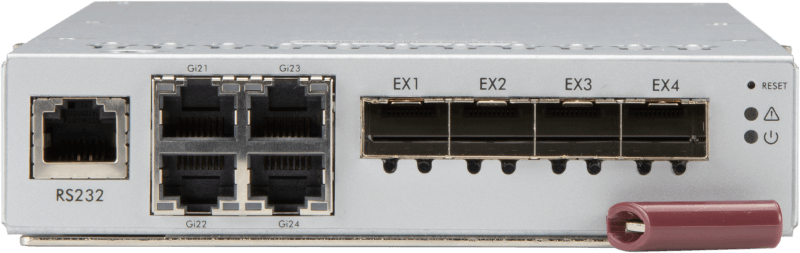

| Internal Ports |

|

| External Uplink Ports |

|

| Type |

|

| Switching Capacity |

|

| Trunking |

|

| Jumbo Frame Support |

|

| Remote Management |

|

| Protocols |

|

| OS |

|

| Download |

| Internal Ports |

|

| External Uplink Ports |

|

| Type |

|

| Switching Capacity |

|

| Trunking |

|

| Jumbo Frame Support |

|

| Remote Management |

|

| Protocols |

|

| OS |

|

| Download |

|

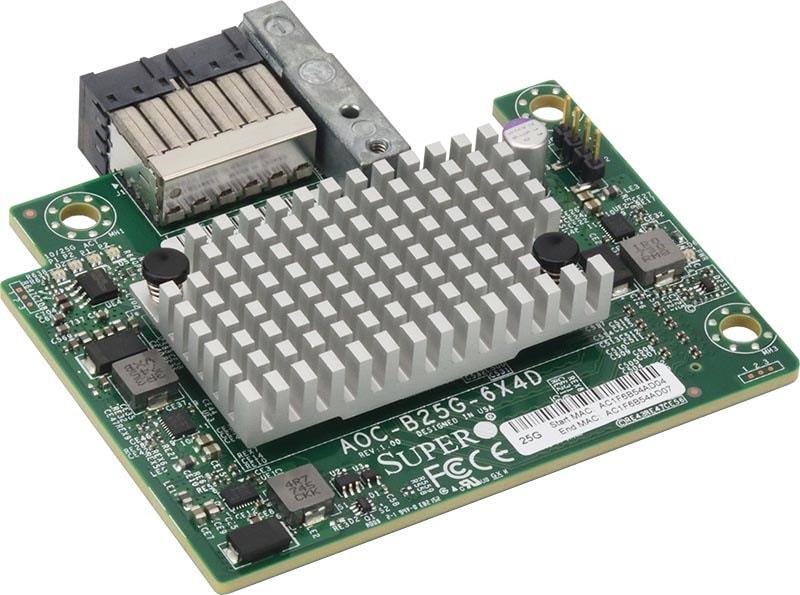

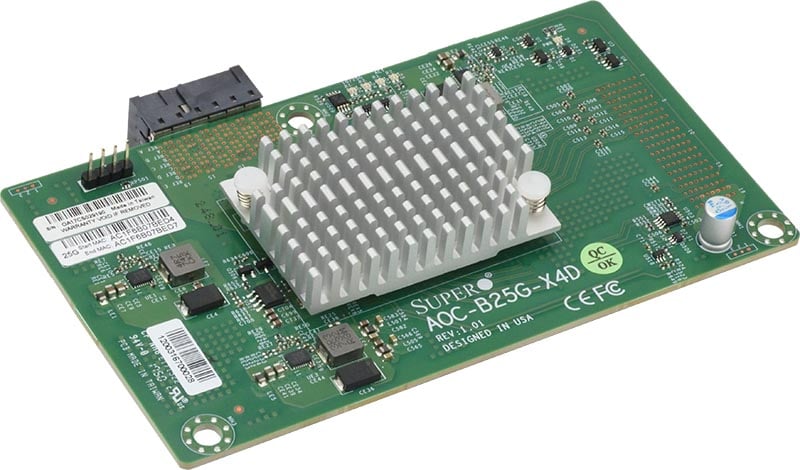

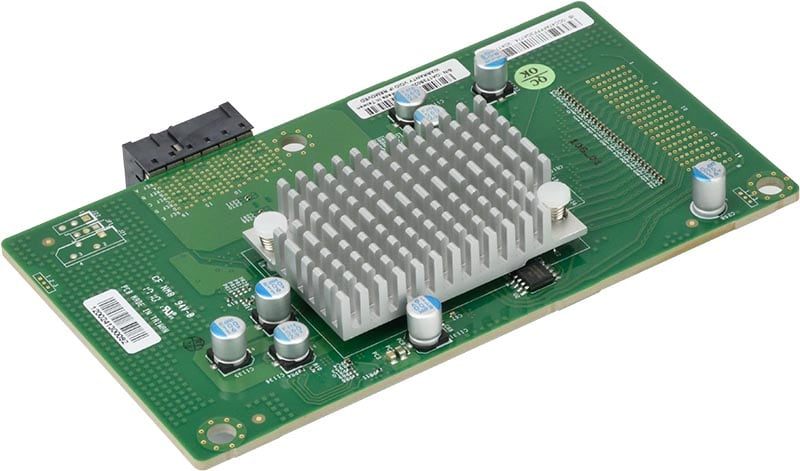

| Chipset |

|

| Ports |

|

| Power Consumption |

|

| Download |

|

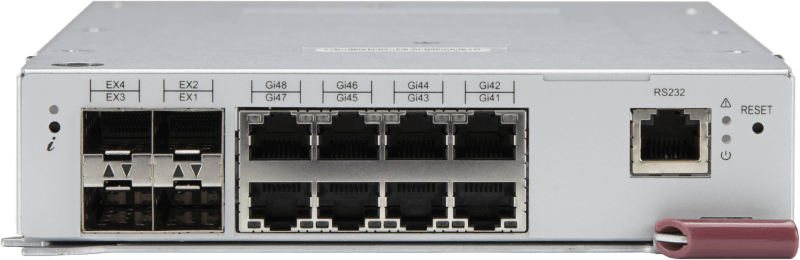

1G Ethernet

| General Specifications |

|

| Switching Capacity |

|

| Physical Layer Features |

|

| Layer 2 Features |

|

| Advanced Layer 2 Features |

|

| Security Features |

|

| System Management |

|

| Multicast |

|

| Automation |

|

| Weight |

|

| Dimensions (W x D x H) |

|

| Download |

| Internal Ports |

|

| External Uplink Ports |

|

| Type |

|

| Layer 2 Features |

|

| Advanced Layer 2 Features |

|

| Jumbo Frame Support |

|

| Remote Management |

|

| Protocols |

|

| OS |

|

| Download |

InfiniBand

| Chipset |

|

| InfiniBand Ports |

|

| Ethernet Ports |

|

| Power Consumption |

|

| Download |

|

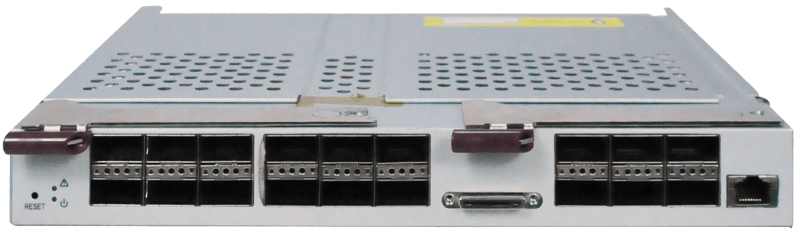

| Switch Chip |

|

| Internal Ports |

|

| External Uplink Ports |

|

| Bandwidth |

|

| Download |

|

Intel® Omni-Path

| Chipset |

|

| Omni-Path Ports |

|

| Power Consumption |

|

| Download |

|

Pass-Thru Module

| General Specifications |

|

| Partner Switches |

|

| Configuration Info | |

| Weight |

|

| Dimensions (W x D x H) |

|

| Download |